What I Learned About AI at Stanford

I was sitting with a rapt audience at Stanford’s new-to-me Computing and Data Science (CoDa) building on a Saturday morning, two days into a volunteer leadership event, when I heard one of the best talks about the state of AI.

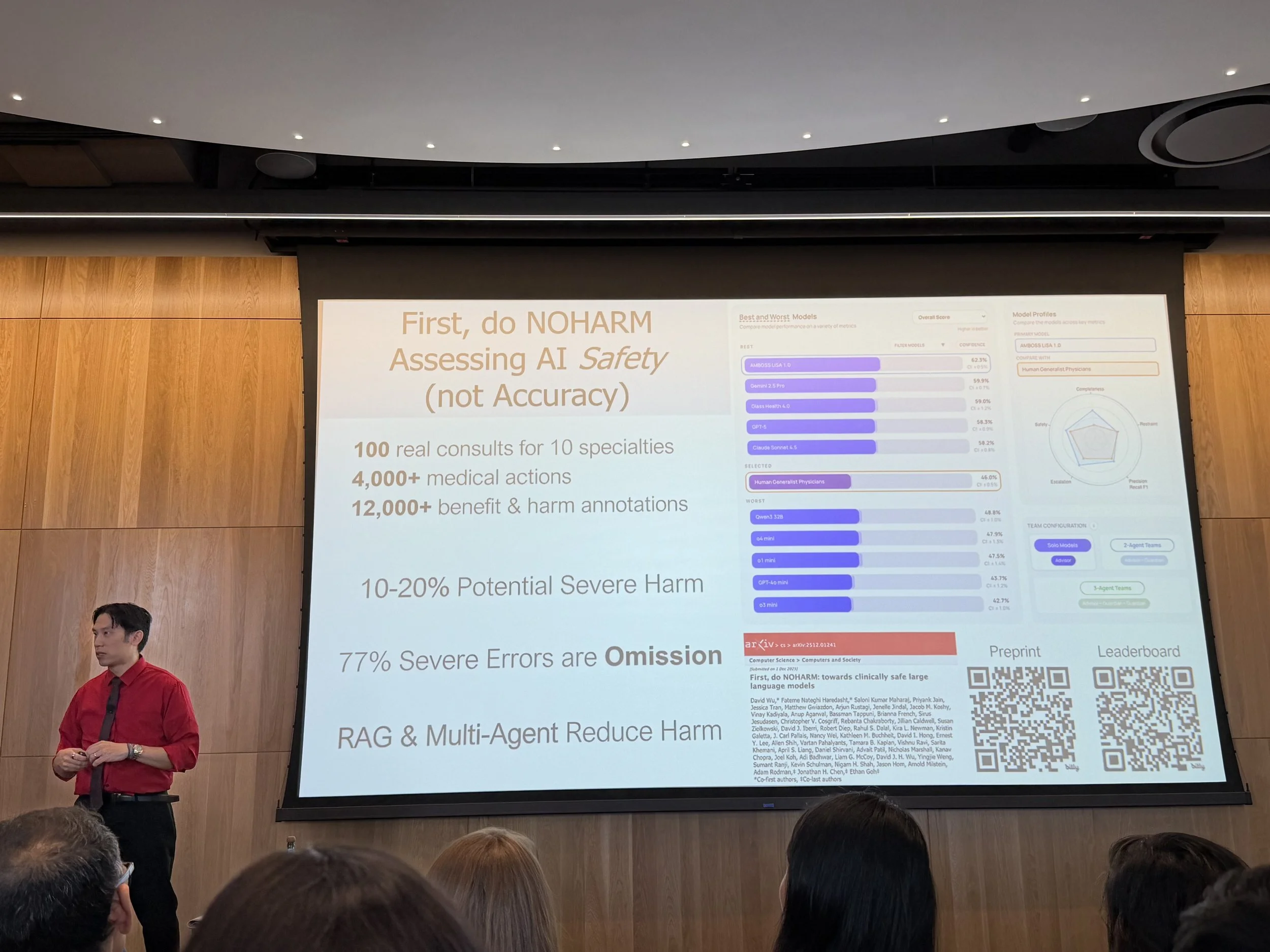

Dr. Jonathan Chen discussing AI safety in medicine

The keynote was called "AI in Medicine: Integrated Intelligence or Illusory Limitations?" The speaker was Dr. Jonathan Chen, a professor of medicine, a computer scientist, and a talented magician. Yes, he showed lots of fascinating slides on AI safety in medicine. But what I took away from the talk was a better understanding of how human beings behave when exposed to AI: what we do when we're overwhelmed and scared, and what happens when we have the agency to choose to be better.

Dr. Chen shared stories from students and their first real exposure to ChatGPT from ye olden days of 2023. In the first year of AI usage, homework scores went up significantly. But then final exam scores went down. Significantly below previous years’ averages. What was happening was obvious: students were using AI to get the homework done, not to understand the material.

When AI showed up as a shortcut, they took it. Of course they did.

Survival Mode

This is what I think about when I hear people express shock at AI misuse in schools and workplaces: of course. Of course students used AI to complete assignments instead of understanding concepts. Of course professionals are using it to generate reports they skim rather than synthesize. We are busy. We are overwhelmed. We are operating in systems built on competition — grades, performance reviews, market share. When a new tool arrives that lets us clear the pile faster, the very human thing to do is use it that way.

I am not exempt from this. I have used AI to draft things I could have written from scratch. Some of those drafts I should have written from scratch. There's a version of me at twenty-five, bone-tired and trying to keep up at a design firm, who would have used every available shortcut and not thought twice.

Survival mode isn't a character flaw. It's what you do under pressure in a system that rewards output over understanding. The first year (or the first time you try out a new system) is rarely the right year to judge. We're often messy and all-too-human when we do something new for the first time.

The Experiment that Works

Dr. Chen then described a different experiment, run the following year or so. Students were taught not to use AI to do their work, but to use AI to learn. They were shown how to use it to summarize dense research papers, to quiz themselves on material they needed to retain, and to question the outputs the model returned rather than accept them. They were taught to keep their own cognitive horsepower running while AI handled the scaffolding.

The results that year: both homework scores and exam scores went up.

Not one or the other. Both.

Yes, the technology kept developing, but what really changed was the students' relationship to the tools, built on a better understanding of how to use them. The experiment helped clarify the real goal: to learn, not simply to finish homework faster. Once students moved from "AI does the work" to "AI helps me learn," the gap between homework performance and exam performance closed. They knew the material. They had used AI to know it more deeply, not to avoid knowing it at all.

The Act of Choosing

Earlier that morning, Stanford President Jonathan Levin had given us a state-of-the-university address. He shared a small anecdote about AI, and about something groundbreaking that had happened at Stanford just the week before.

For one hundred years, Stanford has operated on an honor code. When I went to school, there was no proctoring or oversight for exams. Students were trusted, and the system held. It was one of the things that made Stanford, Stanford.

Last week, students voted to change it. For the first time in a century, exams will be proctored.

My first instinct was to feel something like loss. The end of a certain kind of trust, a certain kind of innocence. But then I stayed with it, and I understood it differently.

The students had agency. The change wasn’t imposed by the Stanford administration. They voted it in themselves, collectively, looking at the AI landscape honestly and deciding that the old honor system needed new infrastructure. They looked at a changed world and asked: what do we need now, to keep this fair?

When AI Opens Us Up

I've been coaching founders and executives, and the pattern in those early AI homework scores is one I know intimately from the work. The survival-mode instinct kicks in when the stakes feel high and the tools are new. We always make the most mistakes when we’re trying something for the first time.

What I also know is that this is never the end of the story. There's a second phase, and it's the more interesting one. After the scramble and the shortcuts, something shifts. People start asking: wait, what am I actually optimizing for? That's when AI stops being a cheat and starts becoming something genuinely useful. The Stanford students found this. They changed course not because someone forced them, but because they cared about learning more than homework scores.

Most of us have lived that first year in some form.

Something in us wants to do the actual work. Not all of us, not always, not immediately. But it's there underneath the armor, underneath the overwhelm. And given the right conditions and a little time, it tends to find its way back to the surface.

What excites me isn't where we are with AI right now. It's where we're headed once we've gotten past the first year. That's when the real possibility opens up.